We ran an unblockable swarm of 2,000 cloud phones so agents can use apps like a human

In our previous post, we demonstrated a new architecture for SOTA stealth browser automation.

Android cloud phones face a similar cat-and-mouse game, but with a twist.

Agent intelligence and mobile infrastructure are orthogonal. You can have the smartest agent in the world, but if your phone gets blocked, none of it matters.

Mobile infrastructure hasn't kept up, and the wall agents are hitting isn't software. It's hardware.

Why phones matter

A lot of internet functionality doesn't exist on the web. Banking apps. Dating apps. TikTok posting. Mobile games. Services that only work through native apps.

If your agent needs any of that, it needs Android.

Phone verification is becoming the norm too. Most major platforms now require a phone number. Increasingly, that means not just an SMS code but a real phone environment that passes their checks.

Cloud phone providers sell exactly this promise. ARM-based Android instances, spun up with one API call.

We deployed thousands of them. Then we tried to actually use them.

The wall

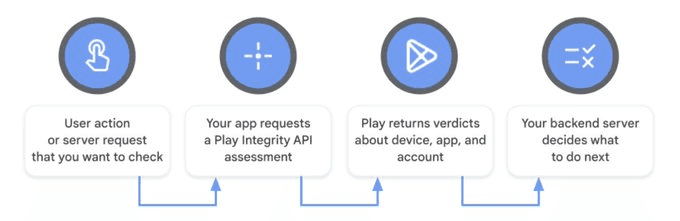

Play Integrity is Google's system for answering one question: "is this a real Android device?"

It's an API any app can call to get a cryptographically signed verdict from Google's servers. Apps that integrate it use that verdict to decide whether you're a legitimate user, often every time you open them.

Three tiers: BASIC, DEVICE, STRONG.

BASIC: the device isn't obviously tampered with.

DEVICE: Google believes it's certified with a locked bootloader and genuine firmware.

STRONG: hardware-backed key attestation, a cryptographic key locked inside secure hardware components.

Most apps that run the check require DEVICE at minimum, and banking apps are starting to require STRONG. When the check fails, the app doesn't show an error. It silently stops working. No login, no account creation, no transactions.
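On the app side, this gating usually happens on a server after it decodes the integrity token. Here's a minimal sketch, assuming the payload has already been decrypted and verified through Google's decode endpoint. The `deviceIntegrity.deviceRecognitionVerdict` labels follow Google's payload format; `highest_tier` and `meets` are hypothetical helper names.

```python
from typing import Optional

# Labels from deviceIntegrity.deviceRecognitionVerdict, weakest to strongest.
TIERS = [
    ("BASIC", "MEETS_BASIC_INTEGRITY"),
    ("DEVICE", "MEETS_DEVICE_INTEGRITY"),
    ("STRONG", "MEETS_STRONG_INTEGRITY"),
]
ORDER = [tier for tier, _ in TIERS]


def highest_tier(payload: dict) -> Optional[str]:
    """Strongest tier whose label appears in an already-decoded verdict, or None."""
    labels = set(payload.get("deviceIntegrity", {}).get("deviceRecognitionVerdict", []))
    best = None
    for tier, label in TIERS:
        if label in labels:
            best = tier
    return best


def meets(payload: dict, required: str) -> bool:
    """True if the verdict reaches at least `required` ("BASIC", "DEVICE" or "STRONG")."""
    best = highest_tier(payload)
    return best is not None and ORDER.index(best) >= ORDER.index(required)


emulator = {"deviceIntegrity": {"deviceRecognitionVerdict": []}}
certified = {"deviceIntegrity": {"deviceRecognitionVerdict": [
    "MEETS_BASIC_INTEGRITY", "MEETS_DEVICE_INTEGRITY"]}}
print(meets(emulator, "DEVICE"))   # False
print(meets(certified, "DEVICE"))  # True
print(meets(certified, "STRONG"))  # False
```

Nothing in the verdict forces the app to explain itself. If `meets(...)` returns False it can simply refuse to proceed, which matches the silent failures described above.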

The reality

The first thing we did after deploying our cloud phones was install a Play Integrity checker on each instance.

It's an app anyone can download to see which of the three verdict tiers a device passes.

The result: three red X marks.

BASIC: fail

DEVICE: fail

STRONG: fail

This was a problem: we couldn't access some of the apps we wanted to automate.

So we did some digging and found providers offering "real phones" that would pass these Play Integrity checks.

We deployed these phones and ran the exact same test.

The result: three green ✓ marks.

BASIC: pass

DEVICE: pass

STRONG: pass

But the phone environments looked suspicious, nearly identical to the emulated cloud phones we had tested first.

So we started tearing the infrastructure apart.

Layers of disguise

Turns out the "real phone" being advertised was still emulated!

So how the heck did it pass Play Integrity?

What we found was a 6-layer bypass mechanism. Every major provider runs some version of it and the engineering is genuinely impressive.

The first four layers are all variations of lying about hardware:

Rewriting 942 system properties to impersonate a Pixel 7 Pro

Deploying 49 custom XML files claiming the device has a StrongBox keystore it doesn't have

Patching the Android framework so when any app asks "does this device support StrongBox?", it gets told yes

Masking the security level of all cryptographic operations so software keys report as hardware-backed

But the layer that actually matters is the Play Integrity Fix.

A privileged system app acting as a fake KeyStore provider. It intercepts attestation requests and answers them using pre-loaded certificates and private keys.

All layers active:

BASIC: pass

DEVICE: pass

STRONG: pass

Impressive for containers on shared datacenter hardware. But everything depends on one file.

The keybox

Think of it as a phone's passport. A keybox is a bundle of cryptographic keys provisioned to a device at the factory, each with a certificate chain rooting up to Google's hardware attestation CA.

When Google says "prove you're real", the phone signs a challenge with its keybox private key. If the signature traces back to a known Google root, it passes.
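To see why the keybox is everything, here's a toy model of that challenge-response. It uses textbook RSA with tiny hard-coded primes and a made-up encoding instead of real X.509 certificates, so it's illustration only and not secure. The shape is the point: the verifier checks that each certificate is signed by its issuer up to a trusted root, then that the leaf key signed the fresh challenge. All names here are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import List

# Toy textbook RSA with tiny primes and no padding: illustration only, NOT secure.


@dataclass(frozen=True)
class Key:
    n: int  # public modulus
    e: int  # public exponent
    d: int  # private exponent: the part a keybox must keep secret


def make_key(p: int, q: int, e: int = 65537) -> Key:
    phi = (p - 1) * (q - 1)
    return Key(n=p * q, e=e, d=pow(e, -1, phi))  # modular inverse needs Python 3.8+


def digest(data: bytes, n: int) -> int:
    # Hash, then reduce into the toy modulus.
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n


def sign(key: Key, data: bytes) -> int:
    return pow(digest(data, key.n), key.d, key.n)


def verify(n: int, e: int, data: bytes, sig: int) -> bool:
    return pow(sig, e, n) == digest(data, n)


@dataclass(frozen=True)
class Cert:
    subject: str
    n: int
    e: int
    issuer: str
    sig: int  # issuer's signature over this cert's subject and public key


def tbs(subject: str, n: int, e: int) -> bytes:
    # "To-be-signed" bytes: a stand-in for the real certificate encoding.
    return f"{subject}|{n}|{e}".encode()


def issue(subject: str, key: Key, issuer: str, issuer_key: Key) -> Cert:
    return Cert(subject, key.n, key.e, issuer, sign(issuer_key, tbs(subject, key.n, key.e)))


def verify_chain(chain: List[Cert], root: Cert) -> bool:
    # chain[0] is the device (leaf) cert; each cert must be signed by the next one,
    # and the last one by the trusted root.
    parents = chain[1:] + [root]
    for cert, parent in zip(chain, parents):
        if cert.issuer != parent.subject:
            return False
        if not verify(parent.n, parent.e, tbs(cert.subject, cert.n, cert.e), cert.sig):
            return False
    return True


def check_attestation(challenge: bytes, response: int, chain: List[Cert], root: Cert) -> bool:
    # Pass only if the chain reaches the trusted root AND the leaf key signed this challenge.
    leaf = chain[0]
    return verify_chain(chain, root) and verify(leaf.n, leaf.e, challenge, response)


root_key, inter_key, leaf_key = make_key(1009, 1013), make_key(2003, 2011), make_key(3001, 3011)
root = issue("Root CA", root_key, "Root CA", root_key)
inter = issue("Intermediate", inter_key, "Root CA", root_key)
leaf = issue("Device", leaf_key, "Intermediate", inter_key)
chain = [leaf, inter]

challenge = b"nonce-from-server"
response = sign(leaf_key, challenge)  # only the holder of leaf_key.d can produce this
print(check_attestation(challenge, response, chain, root))  # True
```

Nothing in `check_attestation` looks at which hardware produced `response`. Whoever holds `leaf_key` passes, which is why a copied keybox is all it takes.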

Historically, the keybox was just a file: plaintext XML in the firmware, with the private key protected by nothing more than Linux file permissions.

If you have a keybox, you can make any device claim to be the phone it belongs to. Load it onto a cloud phone, feed it to the integrity fix app, and that container assumes the cryptographic identity of a genuine device.

That single file is what the entire bypass rests on.

So we asked: "Where do these keyboxes actually come from?"

The supply chain

Some manufacturers published firmware update packages on their own websites with keybox.xml inside, unencrypted. Grab the firmware, extract the factory partition, and the keybox is right there.

We tested this with Nubia's Red Magic gaming phone line. Pulled valid keyboxes from 6 models spanning 2019 to 2023. Checked every one against Google's Certificate Revocation List.

All unrevoked. The best one, from a 2023 Red Magic 8 Pro, had a root certificate valid until 2042.

Loaded it onto a cloud phone. Ran the check. DEVICE integrity: pass.

Our cloud phone had the identity of a phone we never physically touched, pulled from a public firmware image anyone can download!

Beyond firmware, there's a whole keybox market.

Telegram and XDA communities trade keyboxes openly.

Some operations buy cheap phones in bulk, extract the keyboxes, and resell them.

We had the supply chain. We had the bypasses. We had cloud phones passing all three integrity levels.

The burn rate

Every keybox is a finite resource.

Google maintains a revocation list of keyboxes it knows are compromised. When yours gets added, every device using it fails.

They find them by detecting one "device" signing in from dozens of countries in a day, or the same attestation key appearing in millions of requests.
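Google doesn't publish how its detection works, so treat this as a hypothetical sketch of the two signals above: group attestation events by key and day, then flag any key seen from too many countries or at too high a volume. The event fields and both thresholds are assumptions picked for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Set, Tuple


@dataclass(frozen=True)
class AttestationEvent:
    key_id: str   # hypothetical: e.g. the attestation certificate's serial
    day: str      # "YYYY-MM-DD"
    country: str  # country code from IP geolocation


def flag_keys(events: Iterable[AttestationEvent],
              max_countries_per_day: int = 3,
              max_requests_per_day: int = 10_000) -> Set[str]:
    """Return key ids that look shared across many 'devices' on any single day."""
    countries: Dict[Tuple[str, str], Set[str]] = defaultdict(set)
    volume: Dict[Tuple[str, str], int] = defaultdict(int)
    for ev in events:
        bucket = (ev.key_id, ev.day)
        countries[bucket].add(ev.country)
        volume[bucket] += 1
    flagged = set()
    for bucket, seen in countries.items():
        if len(seen) > max_countries_per_day or volume[bucket] > max_requests_per_day:
            flagged.add(bucket[0])
    return flagged


events = [AttestationEvent("key-a", "2024-05-01", c) for c in ("US", "DE", "JP", "BR")]
events += [AttestationEvent("key-b", "2024-05-01", "US")] * 50
print(flag_keys(events))  # {'key-a'}
```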

Keyboxes burn. You replace them. They burn again. Running a swarm means managing this constantly.
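From the verifier's side, burning a keybox is just a lookup. Every container loaded with the same keybox presents the same certificate serial numbers, so one revocation entry takes all of them down at once. This sketch assumes a status list shaped like the JSON Google documents for key attestation (an `entries` object keyed by hex serial number); treat the exact field names and serial formatting as assumptions, and the `c0ffee` entry as made-up data.

```python
import json
from typing import Iterable, Set


def load_revoked(status_json: str) -> Set[str]:
    """Serials from the status list; any listed status (e.g. REVOKED, SUSPENDED) counts."""
    entries = json.loads(status_json).get("entries", {})
    return {serial.lower() for serial in entries}


def chain_revoked(serials: Iterable[int], revoked: Set[str]) -> bool:
    # Assumption: entries are lowercase hex with no "0x" prefix or leading zeros.
    return any(format(s, "x") in revoked for s in serials)


# Hypothetical status list with one made-up entry.
status = '{"entries": {"c0ffee": {"status": "REVOKED", "reason": "KEY_COMPROMISE"}}}'
revoked = load_revoked(status)
print(chain_revoked([0x1234, 0xC0FFEE], revoked))  # True: every container sharing this chain fails
print(chain_revoked([0x1234], revoked))            # False
```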

Google is also eliminating static keyboxes on newer devices entirely through Remote Key Provisioning. The factory key gets locked inside the TEE, a secure execution environment isolated from the main OS, and attestation certificates are issued remotely by Google. The private key never leaves the hardware. No file to find, nothing to extract.

This doesn't kill the supply overnight. Older devices still have extractable keys and the revocation system has latency. But the window is narrowing.

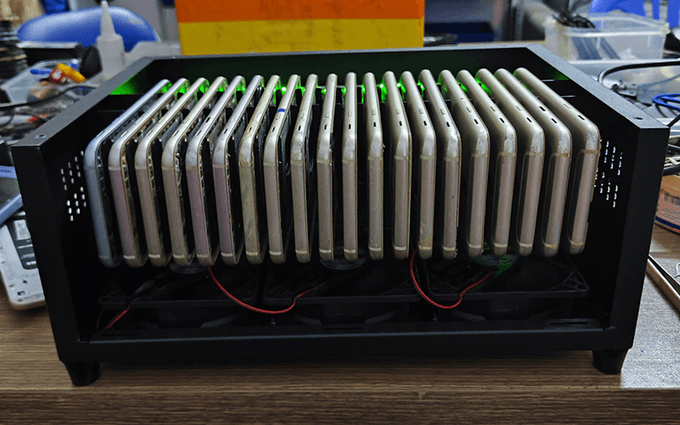

Why real phones aren't enough

"Forget cloud phones. Buy real hardware. A few hundred Pixels, genuine keyboxes, valid Play Integrity out of the box."

They work. But they're expensive to source, difficult to scale, and each phone is exactly one identity.

$200-800 per device, plus power, space, and networking. And if usage patterns aren't handled carefully, Google's risk engine burns the device permanently.
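The hardware line alone adds up quickly. Here's the arithmetic, taking 300 devices as an assumed stand-in for "a few hundred" and 2,000 to match the swarm size in the title. It covers purchase price only.

```python
from typing import Tuple


def fleet_hardware_cost(devices: int, low: int = 200, high: int = 800) -> Tuple[int, int]:
    # Purchase price only; power, space, networking and replacements come on top.
    return devices * low, devices * high


print(fleet_hardware_cost(300))   # (60000, 240000)
print(fleet_hardware_cost(2000))  # (400000, 1600000)
```

And each of those devices is still exactly one identity.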

Real phones have a role, but they're not the foundation. A hybrid model, with real phones supplying keyboxes and emulated cloud phone instances providing scale, is the best of both worlds.

What's next

These cloud phones run real Android on real ARM hardware with real Chrome. Real browser fingerprints, real touch events, real mobile user agents.

For web automation, this fingerprint quality is a massive advantage over any standard patching approach. And websites can't access Play Integrity the way native apps do.

It's an Android API, and the browser sits one level above it on the stack without exposing it to web pages. Antibots on the web are stuck evaluating fingerprints, behavior, and network signals.

A game of cat and mouse, but this time in our favor, and we already know how to win.

Most cloud phone providers today operate overseas with questionable infrastructure and legitimacy.

We're building our own Android cloud phone offering in the US.

The same infrastructure thesis behind Driver: real hardware, reliability, and maybe even some distributed networks.

If you're interested in stealth browsers or future cloud phone infrastructure, check us out!